AWS re:Invent 2023: Gen AI Steals the Show

Highlights and key learnings from my week at the AWS re:Invent 2023 conference.

It’s been a fun week here in Las Vegas at the AWS re:Invent conference. I am usually on the other side, hosting our customers at Dreamforce, so I enjoyed taking a back seat.

Where did I spend most of my time? Not surprisingly, learning about AWS’s latest Gen AI offerings. They did not disappoint. I also focused on channel partners and attended the AWS Partner keynote, which was packed with announcements (more below).

Let’s dive into the key highlights of my week.

Build a cost-effective conversational agent with Llama 2

In this session, Kamran Khan (AWS) and Philipp Schmid (Hugging Face) showcased how to build a cost-effective conversational agent with the Llama 2 model from Hugging Face using AWS Trainium and AWS Inferentia.

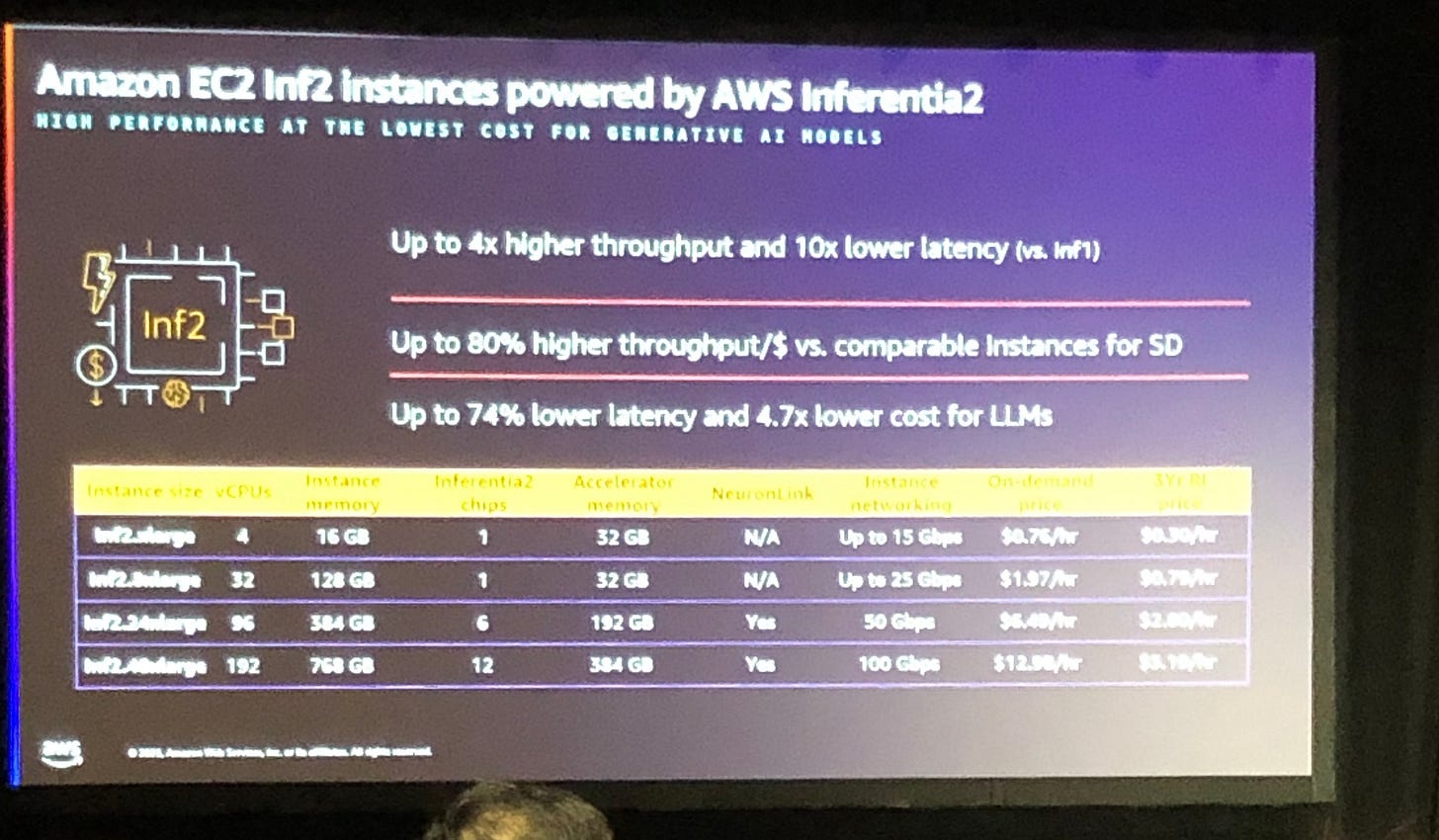

The presenters shared the latest performance benchmarks for Trainium and Inferentia2:

Inf2 offers up to 4x higher throughput and up to 10x lower latency than Inf1

Trainium offers up to 50% cost savings to fine-tune your LLM

Trainium is offered “à la carte”: 4 to 128 CPUs, 1 to 16 Trainium chips, 10 Gbps to 1,600 Gbps of network bandwidth (yes, you read that correctly), and from $1 to $13 an hour

SageMaker lets you train and fine-tune your model. It can log conversations (prompts and answers), which comes in handy for future data labeling and re-training. While few may want to fine-tune models, mining LLM conversations and using them to enrich your test dataset can be very efficient (be careful with PII).
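To make the logging-and-mining idea concrete, here is a minimal Python sketch, assuming you already have the prompt/answer pairs in hand. It does not use any SageMaker API; the function names and the two regex patterns are hypothetical and only catch obvious e-mail addresses and phone-like numbers, so treat them as a placeholder for a proper PII detection step.

```python
import json
import re
from typing import Iterable

# Hypothetical, illustrative patterns: they catch obvious e-mail addresses and
# phone-like digit runs (and will also over-redact things like dates).
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace e-mail addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def to_jsonl(turns: Iterable[tuple[str, str]]) -> str:
    """Serialize (prompt, answer) pairs as redacted JSON Lines records.

    The empty "label" field is left for a later human or automated labeling pass.
    """
    records = (
        {"prompt": redact(prompt), "answer": redact(answer), "label": None}
        for prompt, answer in turns
    )
    return "\n".join(json.dumps(record) for record in records)


if __name__ == "__main__":
    print(to_jsonl([("Email me at jane@example.com", "Sure, I will follow up.")]))
    # {"prompt": "Email me at [EMAIL]", "answer": "Sure, I will follow up.", "label": null}
```

Redacting before the records are written, rather than at analysis time, keeps raw PII out of the dataset you later label and mine.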

The Neuron SDK lets you build your services on top of AWS Trainium and Inferentia. It natively supports PyTorch, TensorFlow, OpenXLA, OctoML, Weights & Biases, Hugging Face, and Ray.

AWS is committed to giving customers choice beyond its own offerings; as such, it integrates with both closed- and open-source LLMs. AWS partners closely with Hugging Face, the leading open-source LLM community.

Philipp Schmid, technical lead for LLMs at Hugging Face, shared some interesting statistics: Hugging Face has 400K models, 80K datasets, 200K demos, and one million downloads. Their Open LLM Leaderboard features the most prominent open-source LLMs (pre-trained or fine-tuned). They have a similar leaderboard for embeddings, called MTEB (Massive Text Embedding Benchmark). I am personally a huge proponent of Hugging Face, which promotes cross-collaboration and the advancement of AI research within the community.

Hugging Face offers Optimum Neuron to interface between the Hugging Face Transformers library and AWS accelerators, including AWS Trainium and AWS Inferentia.

Data patterns for Gen AI applications

AWS data scientists Vlad Vlasceanu and Siva Raghupathy led this session, sharing effective data strategies for building domain-specific experiences. It was a bit theoretical, but I left with some good nuggets:

Building a high-quality data catalog helps capture business constructs and is critical to the quality of the Gen AI app

Semantic context is needed to extract meaning out of the data; this is what helps ground the LLM and provide better answers

Amazon Kendra is AWS’s ML-powered search service for querying documents; you do not have to store them in a vector database. There was no mention of request throughput or latency.

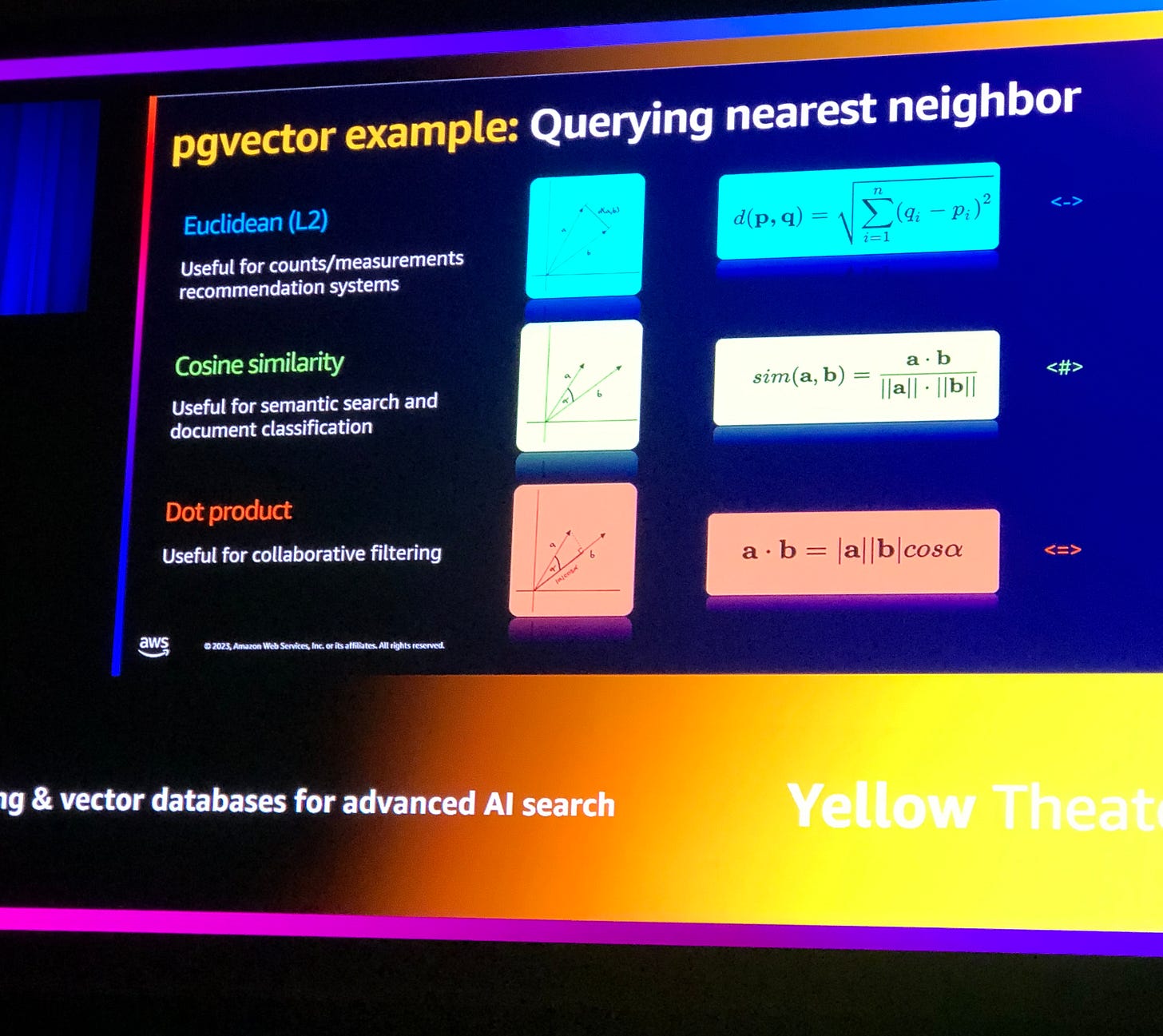

Besides Kendra, you can implement RAG with Aurora/PostgreSQL or Amazon OpenSearch Service

The top KPIs for RAG include Queries Per Second (QPS), which measures throughput, and the Recall Rate. Since most RAG implementations use an “approximate search” algorithm instead of an “exact search” for performance reasons, the Recall Rate lets you measure how good your search results are: Recall Rate = # correct answers / # queries. You want to aim for >99%.

RAG is also very useful for responding to changes in data; e.g., after a release that updates an existing feature, RAG can help you push the new information to the LLM. The knowledge management (KM) lifecycle is usually an afterthought, and RAG has a role to play here.

Governance is critical; it includes data security and the compliance of prompts, completions, and flows; who is allowed to ask, see, and respond; data quality; and responsible AI
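Here is a minimal Python sketch of how the Recall Rate can be measured, assuming you can run an exact (brute-force) search on a sample of queries to serve as ground truth. The toy corpus, the `approx` results (standing in for what an approximate index would return), and the function names are all hypothetical. With k = 1, recall@k reduces to the # correct answers / # queries formula above.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def exact_top_k(query: list[float], corpus: dict[str, list[float]], k: int) -> list[str]:
    """Exact (brute-force) search: score every document and keep the k best IDs."""
    ranked = sorted(corpus, key=lambda doc_id: cosine(query, corpus[doc_id]), reverse=True)
    return ranked[:k]


def recall_at_k(exact: list[list[str]], approx: list[list[str]], k: int) -> float:
    """Share of the exact top-k IDs that the approximate search also returned, over all queries."""
    hits = sum(len(set(e[:k]) & set(a[:k])) for e, a in zip(exact, approx))
    return hits / (k * len(exact))


if __name__ == "__main__":
    corpus = {"a": [1.0, 0.0], "b": [0.9, 0.1], "c": [0.0, 1.0]}
    queries = [[1.0, 0.0], [0.0, 1.0]]
    exact = [exact_top_k(q, corpus, k=1) for q in queries]  # [["a"], ["c"]]
    approx = [["b"], ["c"]]  # hypothetical ANN output that misses the first query
    print(recall_at_k(exact, approx, k=1))  # 0.5, i.e., 1 correct answer / 2 queries
```

Brute-force search is too slow to serve production traffic, which is why approximate indexes exist, but it is cheap enough on a sampled query set to act as the reference you score the approximate index against.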

Drive ML strategies with data extracted from chat messages

Critical business data is often buried in chat messages. This session explored challenges, solutions, and architectures for analyzing chat messages with NLP, drawing on a real-life implementation at BP UK. I was intrigued by the topic because my team is working on a Gen AI admin assistant app at Salesforce, and I think our app could benefit from the chat messages attached to cases stored in Salesforce CRM.

Key learnings:

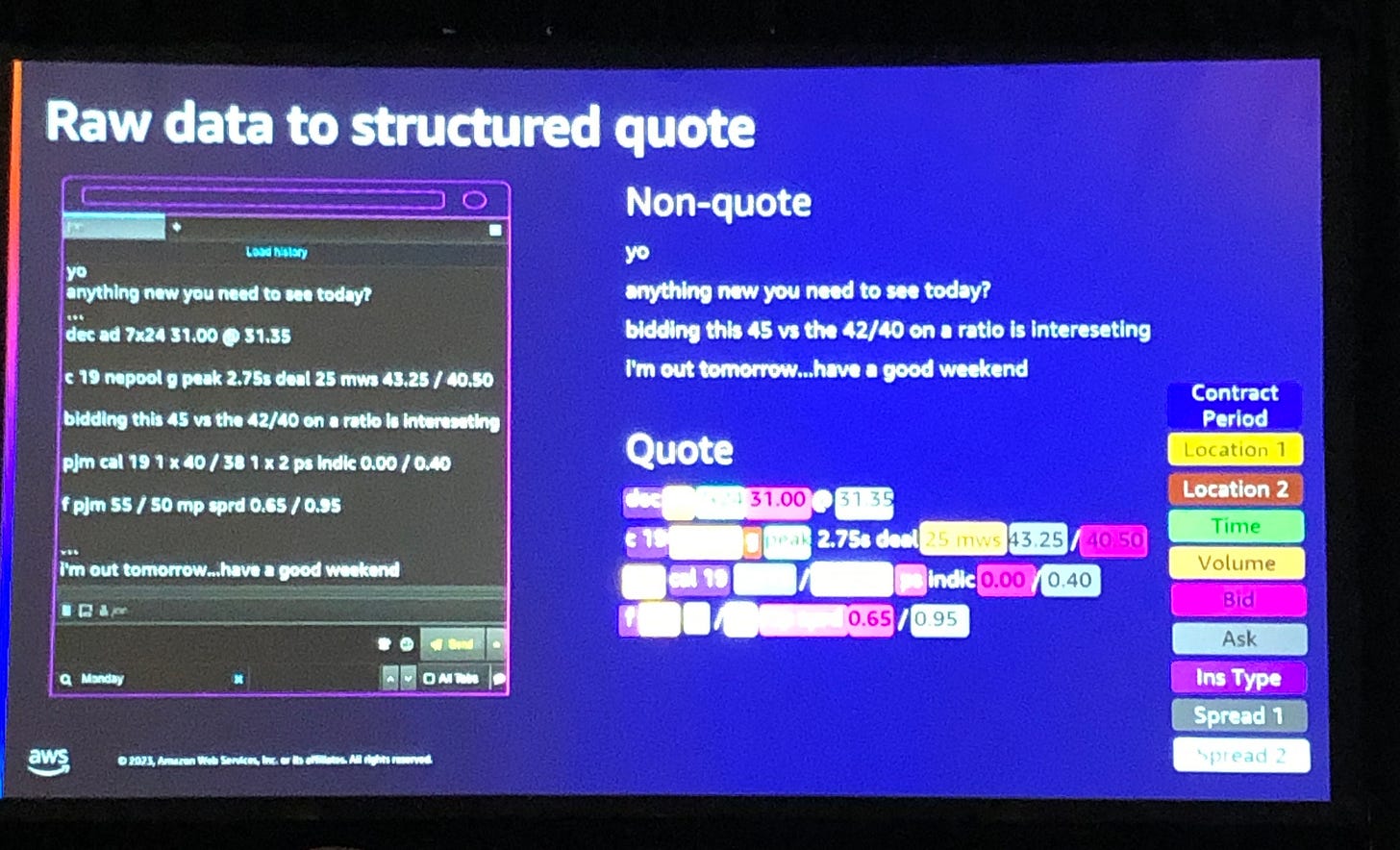

use case: power traders chat with buyers about derivatives and futures prices and negotiate trades

the trade chat captures a lot of information, mostly domain-specific, with acronyms that make it impossible for an untrained reader to understand

Data enrichment takes place during the classification step: the app tags words and acronyms in the chat and maps them to an entity model, e.g., the data for the quote, the spread (bid/ask), the actual offer, the delivery location, etc.

Solution architecture:

Data ingestion and preparation (extract data or metadata of interest)

Classification service, e.g., separating quote vs. non-quote messages; includes entity extraction

Post-processing to “normalize” and standardize data based on similarities, type of quote, etc.

Storage in a database

BP started with one commodity trader back in 2018 and slowly expanded

While they do not use the LLM in real-time conversations, they use it internally to equip brokers with a power app to learn about the most efficient trading strategies and derive best practices; it helps their brokers come up with competitive quotes and preserve margin

There was no PII in the chats, which made it easier to ingest and process the data
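The four-step architecture above can be sketched in Python as follows. This is not BP’s implementation: the session described NLP-based classification, whereas this sketch swaps in a simple regex rule, and the quote format, the glossary of shorthand, and all function names are hypothetical. An in-memory SQLite database stands in for the storage layer.

```python
import re
import sqlite3

# Hypothetical glossary mapping trader shorthand to normalized entity values.
LOCATIONS = {"DE": "Germany", "FR": "France", "NL": "Netherlands"}
PRODUCTS = {"BL": "baseload", "PK": "peakload"}

# Hypothetical quote format: "<location> <product> <tenor> <bid>/<ask>", e.g. "DE BL Q1 95.50/96.25".
QUOTE_RE = re.compile(
    r"^(?P<loc>[A-Z]{2})\s+(?P<product>BL|PK)\s+(?P<tenor>\w+)\s+"
    r"(?P<bid>\d+(?:\.\d+)?)/(?P<ask>\d+(?:\.\d+)?)$"
)


def classify(message: str) -> bool:
    """Classification step (a regex stand-in for an NLP model): is this chat line a quote?"""
    return QUOTE_RE.match(message.strip()) is not None


def extract(message: str) -> dict:
    """Entity extraction and normalization for a message already classified as a quote."""
    m = QUOTE_RE.match(message.strip())
    bid, ask = float(m["bid"]), float(m["ask"])
    return {
        "location": LOCATIONS.get(m["loc"], m["loc"]),  # unknown codes pass through unchanged
        "product": PRODUCTS[m["product"]],
        "tenor": m["tenor"].upper(),
        "bid": bid,
        "ask": ask,
        "spread": round(ask - bid, 4),
    }


def run_pipeline(messages: list[str], conn: sqlite3.Connection) -> int:
    """Ingest -> classify -> extract/normalize -> store; returns the number of quotes stored."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS quotes "
        "(location TEXT, product TEXT, tenor TEXT, bid REAL, ask REAL, spread REAL)"
    )
    quotes = [extract(msg) for msg in messages if classify(msg)]
    conn.executemany(
        "INSERT INTO quotes VALUES (:location, :product, :tenor, :bid, :ask, :spread)",
        quotes,
    )
    conn.commit()
    return len(quotes)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    chat = ["morning, any interest today?", "DE BL Q1 95.50/96.25", "FR PK Dec 110/112"]
    print(run_pipeline(chat, conn))  # 2
    print(conn.execute("SELECT location, product, spread FROM quotes ORDER BY rowid").fetchall())
    # [('Germany', 'baseload', 0.75), ('France', 'peakload', 2.0)]
```

Keeping classification separate from extraction means the cheap quote/non-quote filter runs on every message, while the more detailed entity mapping only runs on the messages that matter.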

Building an AWS Solution Architect Agent with Amazon Bedrock

This session showed how you can build a solutions architect agent on Amazon Bedrock. Banjo Obayomi and Olivier Leplus came well prepared with end-to-end demos and spent a lot of time showing us how things work behind the scenes.

Key highlights:

They walked us through three Gen AI use cases (query documentation, generate code, and create diagrams), where the Agent performs a variety of tasks

Agent setup workflow:

create an IAM policy for the Agent service; the Agent will call Bedrock at runtime and get access via the IAM policy

create the “Agent” in the IAM console and assign it the IAM policy created previously

define the system prompt for the Agent and test it in the Bedrock console

Query documentation:

use case: a few lines of code to ingest the AWS Well-Architected Framework documentation and turn it into embeddings stored in AWS

in a funny twist, they used Claude v2 for the chatbot to capture the user prompt and Amazon Titan for prompt responses

they showed how you can connect Bedrock to managed vector stores for RAG (Redis, Pinecone, and Amazon OpenSearch)

One of the big advantages of using their own knowledge base (KB) is source attribution, i.e., you can provide links to the documents that support the answer, similar to Bing Chat. This greatly helps build customer trust!

The coding use case was implemented with Amazon CodeWhisperer

Diagram generation use case:

They trained their model with a few high-quality AWS solution architecture diagrams

The Agent service uses an OpenAPI schema to identify the intent of a prompt request; it’s a smart approach I was not aware of. The schema describes the APIs available to the Agent to perform tasks; at runtime, the LLM “understands” which tasks (APIs) it can perform and uses this information to map user intent to tasks.

The mapping of the Agent service to APIs takes place in the Bedrock console; you can name your “API action” and link it to the OpenAPI JSON file that lists the APIs and their metadata
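To illustrate the OpenAPI-driven intent mapping, here is a minimal Python sketch. The schema fragment, operation IDs, and handlers are hypothetical, and the LLM’s choice of action is simply passed in as a string. In Bedrock, the schema is linked through the console and the agent invokes the backend you configured (typically an AWS Lambda function); this sketch only imitates that step with local functions and does not reproduce Bedrock’s request format.

```python
import json

# Hypothetical OpenAPI 3.0 fragment describing the actions an agent may take.
# The descriptions are what the LLM reads when deciding which action fits the user's intent.
OPENAPI_SCHEMA = {
    "openapi": "3.0.0",
    "info": {"title": "Solutions architect actions", "version": "1.0.0"},
    "paths": {
        "/diagram": {
            "post": {
                "operationId": "createDiagram",
                "description": "Generate an AWS architecture diagram from a description.",
            }
        },
        "/code": {
            "post": {
                "operationId": "generateCode",
                "description": "Generate code for an AWS service integration.",
            }
        },
    },
}


def describe_actions(schema: dict) -> str:
    """Flatten the schema into a tool list that could be placed in the LLM prompt."""
    lines = []
    for path, methods in schema["paths"].items():
        for method, op in methods.items():
            lines.append(f"{op['operationId']}: {method.upper()} {path} - {op['description']}")
    return "\n".join(lines)


# Hypothetical local handlers standing in for the real backends.
HANDLERS = {
    "createDiagram": lambda text: {"diagram": f"diagram for: {text}"},
    "generateCode": lambda text: {"code": f"# code for: {text}"},
}


def dispatch(llm_choice: str, user_input: str) -> str:
    """Route the operationId the LLM picked to its handler; reject unknown actions."""
    if llm_choice not in HANDLERS:
        return json.dumps({"error": f"unknown action {llm_choice}"})
    return json.dumps(HANDLERS[llm_choice](user_input))


if __name__ == "__main__":
    print(describe_actions(OPENAPI_SCHEMA))
    print(dispatch("createDiagram", "three-tier web app"))
    # {"diagram": "diagram for: three-tier web app"}
```

Rejecting operation IDs that are not in the schema matters because the LLM’s choice is model output, not trusted input.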

Overall, I found that AWS has built a compelling platform stack to develop, test, and run agents. They follow a low-code approach and stay open by integrating with non-AWS services. It is built on solid foundations that will keep improving over time.

AWS Partner Keynote with Dr. Ruba Borno

As someone who has owned Salesforce PRM for years and has been living and breathing the channel partner world, I had been eagerly awaiting this session, and AWS did not disappoint. The session, led by Ruba Borno, was packed with announcements that delighted the room.

AWS and Salesforce announced a partnership to expand Salesforce Marketplace listings to the AWS Marketplace.

AWS and ServiceNow announced a partnership to sell ServiceNow’s SaaS offerings on AWS Marketplace

New tools for Solution Building enablement, underscoring how partners increasingly need to sell cross-vendor solutions, rather than individual apps, to customers

Multi-partner engagement tools such as partner matchmaking, standardized processes, and GTM for solution building with a formal Strategic Collaboration Agreement (SCA)

Curated partner programs for ISVs; improved experience in Partner Central, simplified program requirements, better co-sell and GTM motions for ISVs

Enhanced Migration Acceleration Program (MAP)

Expanded benefits for resellers; Foundational Technical Review (FTR), Customer Engagement Incentive (CEI), Public Sector Discount, simplified incentive structure with the Partner Growth Rebate (PGR), renewed Partner-Led Support, and new AWS Diagnostic Tools.

AWS PartnerEquip is a new benefit for partners to build and promote specializations on AWS Marketplace. According to Canalys, the leading channel analyst firm, 83% of cloud customers rank specializations among their top three criteria for partner selection (full report here).

A reduction in listing fees

A unified experience across AWS Marketplace, programs, and Partner Central

New Partner Central tools to drive partner productivity (partner dashboards, streamlined onboarding, co-selling, and pipeline management)

It truly felt like Christmas came to town early this year! Kudos to the AWS team and their drive to deliver a simplified and streamlined partner experience across their partner sites.

AWS re:Invent Keynote

You can watch the whole keynote here. I am very excited about the Amazon Q announcement. Adam Selipsky pitched it as a Gen AI assistant for the workforce to get insights into business data and drive employee productivity. Amazon Q is built with security and privacy in mind.

Given AWS’s Gen AI stack, I can see Amazon Q becoming a powerful assistant for both IT and business workers. With AWS moving into agents, we could soon see Amazon Q offer both knowledge and task completion.

What Else Caught My Attention

It was my first time attending the AWS re:Invent conference, so every experience was new to me.

The quality of catering: the food for lunch was really good! And this is coming from a Frenchman with high standards. Who would have thought? Warm meals with sweets for dessert (my weakness), and perfectly executed logistics to move thousands of attendees through in little time!

Clocking 20K+ steps a day: I do not track my daily steps, but one attendee told me he clocked over 20K steps in one day. I knew Las Vegas casinos were big; I did not know that conference centers were so big and located deep behind each casino. You need to arm yourself with patience.

Multi-site configuration: AWS spread the conference across four or five casinos. Going from one casino to another costs you about two hours because sessions are every 30 minutes, you need to be there early, walking is slow, and the shuttle is slow too (I tried it once and gave up). The monorail worked better for me. I ended up planning my days around a single site whenever possible

Multicast sessions: AWS set up a few big rooms with four huge screens that played live sessions hosted in other locations; you can simply walk in and grab a headset. I found it handy, and it sometimes beat the in-session experience (more space, no waiting time, better pictures)

Expo Center invaded by AI: no surprise here; the Expo Center had a strong AI flavor. What a difference a year can make! It seems every single vendor claims to have nailed it. I even talked to a small 100-person startup that can do GenAI across all software platforms and coding languages and provide LLMs for all industries. If only I were ten and still had my candor. 😊

Conclusion

I truly had a blast at AWS re:Invent and hope to make it back next year. AWS is bringing a lot of great innovation to the field of AI. More importantly, they have a partner strategy that sets them apart: only Microsoft plays in the same league, with Google trailing behind. AWS’s strategy of supporting competing vendors on its platform and embracing the open-source model is a win-win for AWS, its partners, and customers.